Shaved FAQ

What happened to your beard?

I shaved.

Have you lost a bet?

No.

Who are you? How did you get past security?

The same guy as last week and the months before that. I shaved. Sorry to shock you, it happens.

Why did you grow a beard in the first place?

Because I felt like it.

Kids amplify your life

Three years ago my life was pretty normal: I had a house, a wife, a car and a decent job. Everything was nice and easy, very mundane. And then my daughter was born.

Impact of kids

Every parent will tell you two things:

- After having kids your life as you knew it… gone

- But the life you have afterwards is much more satisfying!

When I didn’t have kids and I heard people say this, I thought they were just making it sound better than it was. But now I know it is true.

The thing they never...

Stop the Rot, the Rules of Refactoring

A lot of times you hear experienced programmers talking about ‘smelly’ code. These ‘code smells’ are things that just look or feel wrong. Often programmers don’t immediately have a clear idea on how to fix it, but it ‘smells’! These smells often happen when the code ‘rots’.

Let’s do a quick check:

- Are there pieces of code you’d rather not change?

- Are (parts of) the application sometimes scrapped and rebuilt from scratch (over and over)?

- Do pieces of code exist that turned out much more complex than you had initially imagined?

- Do you have pieces of code that feel out of place?

- Is it hard to break up some large classes and/or methods?

- Do you have a hard time coming up with names for certain classes?

Programming, testing, documentation

This week I’ve started work on a new project. This project has strict rules regarding documentation and testing. First of all, everything needs to be modelled in EA (Enterprise Architect), from use cases to the REST API fields. Next we need to perform the actual programming, and the testers write down their tests.

Realization

What is in the documentation?

- A description of who inputs what and what the results should be

What is in the code?

- A description of who inputs what and what the results should be

What is in the test?

-...

Devoxx BE 2014: Aftermovie

A couple of weeks ago Devoxx, the largest annual European Java conference, took place in Antwerp again. As always I was there, with my camera, to capture the amazing atmosphere:

We (me and my colleagues) had a great time, and learned some new things. But most of all, we met great people and received a lot of inspiration. This year I’ve done a short 5-minute Ignite talk on mutation testing. This was my first ‘Ignite’ session and it is hard! The format of an Ignite session is: 20 slides, 5 minutes,...

The Java 9 'Kulla' REPL

Maybe it’ll be part of JDK 9, maybe it won’t… but people are working hard on creating a REPL tool/environment for the Java Development Kit (JDK). More information on the code and the project is available as part of OpenJDK: project Kulla.

Some of you might not be familiar with the term ‘REPL’. It is short for Read Evaluate Print Loop. It is hard to explain exactly what it does, but very easy to demonstrate:

| Welcome to the Java REPL mock-up -- Version 0.23

| Type /help for help

-> System.out.println("Hello World!");

Hello World!

->

Kill all mutants

The post below is the content from my 2014 J-Fall and Devoxx Ignite presentations. You can check out the slides here:

http://www.slideshare.net/royvanrijn/kill-the-mutants-a-better-way-to-test-your-tests

We all do testing

In this day and age you aren’t considered a real Java developer if you are not writing proper unit tests.

We all know why this is important:

- Instant verification that our code works.

- Automatic future regression tests.

But how do we know we are writing proper tests? Well, most people use code coverage to measure this. If the percentage of coverage is high enough you are doing a...
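To make the coverage question concrete, here is a minimal sketch in plain Java. Everything in it is made up for illustration: the class `MutationSketch`, the `isAdult` method and the hand-written "mutant" are assumptions, not code from the slides. A mutation testing tool would normally generate and run such mutants for you. The point is only that a test can execute every line and still not notice a changed boundary.

```java
import java.util.function.IntPredicate;

public class MutationSketch {

    // Hypothetical production code: adults are 18 or older.
    static boolean isAdult(int age) {
        return age >= 18;
    }

    // A hand-written "mutant": the operator >= was changed to >.
    // A mutation testing tool would create variants like this automatically.
    static boolean isAdultMutant(int age) {
        return age > 18;
    }

    // Weak test: runs every line of the method (full line coverage),
    // but never checks the boundary value 18.
    static boolean weakTestPasses(IntPredicate impl) {
        return impl.test(30) && !impl.test(5);
    }

    // Stronger test: also checks both sides of the boundary.
    static boolean strongTestPasses(IntPredicate impl) {
        return impl.test(30) && !impl.test(5) && impl.test(18) && !impl.test(17);
    }

    public static void main(String[] args) {
        // If a test still passes against the mutant, the mutant "survives".
        System.out.println("weak test, original:   " + weakTestPasses(MutationSketch::isAdult));
        System.out.println("weak test, mutant:     " + weakTestPasses(MutationSketch::isAdultMutant));
        System.out.println("strong test, original: " + strongTestPasses(MutationSketch::isAdult));
        System.out.println("strong test, mutant:   " + strongTestPasses(MutationSketch::isAdultMutant));
    }
}
```

In this sketch the weak test passes against both the original and the mutant, so the mutant survives even though coverage is complete. The stronger test fails against the mutant, which is what "killing" it means.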

Building Commander Keen on OS/X

Below is a build log on how to build Commander Keen: Keen Dreams (which was recently released on GitHub) on OS/X using DOSBox and a shareware version found online.

Step 1:

Download and install DOSBox

Finding an image in a mosaic

Browsing the Ludum Dare (see previous post) website I found this post from a friend, Will. He made the following mosaic:

Pretty interesting to see. I’ve already worked with him on improving the algorithm to generate these mosaics in the past. But next he set me a challenge: find your own game thumbnail, it is in there somewhere!

This is the screenshot of my game, used as thumbnail:

So I went through the thumbnails, one time… and a second time… then I decided to solve it...

Ludum Dare #30: A (dis-)connected world...

Last weekend the 30th Ludum Dare competition took place. For those of you unfamiliar with Ludum Dare, this is a very short international game programming contest. You are allowed to use any tool or language but there are strict rules:

- The theme is revealed at the start (and the game must match this theme).

- You get 48 hours, nothing more or less.

- Every image, sprite, song and/or sound effect in the game should be made within these 48 hours.

- The result is open source (but you pick the license).

- You work alone.

(There is also a ‘Jam’ version where you...

I won't enter a teleportation device, ever.

In the future somebody will inevitably invent a teleporter, no doubt about that.

But how will it work?

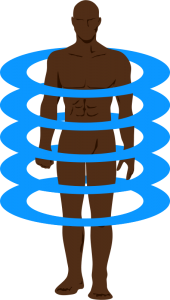

Digital teleportation

The most likely way to teleport would be to digitise yourself. Some as yet uninvented, very high resolution MRI/CT scanner will scan every atom in your body and send this over to the receiver. At the receiving end, an atom printer will build up your entire body again.

However, during a work lunch discussion, I came up with some scary fundamental problems with teleportation.

Transactions

What would happen if, during transmission, we get a failure? We don’t want to...

Devoxx4Kids UK 2014: the video

The other thing I did while I was in London was volunteer and film at the first UK-based Devoxx4Kids.

Here is my video that sums it all up:

It was awesome being there, watching the kids play and learn at the same time. The volunteers were absolutely amazing (a lot of them!) and the atmosphere was very relaxed.

Devoxx UK 2014: the video

I’ve been filming again at Devoxx UK, here is the final cut:

Brings back so many great memories.

Including the night in the pub when ‘we’ won against Spain, 5-1.

Review: Devoxx UK 2014

A year ago Devoxx crossed an ocean for the first time. After all the events in Belgium (Antwerp) and France (Paris) a new satellite event was launched in the UK (London). And here I am again, in a London hotel room, after two days of Devoxx UK.

Day 1: Getting started

With a silly one hour jetlag (which shouldn’t be a thing, but is…) I was awake and at the venue very early. Slowly but surely people started flooding in on the exhibition floor. It quickly became clear there were more visitors than last year. On the exhibition floor...

Raspberry Pi emulation on OS X

Disclaimer/spoiler:

Building for a Raspberry Pi in an emulator is just as slow as on the actual Pi. There is a slightly faster method involving chroot. But if you really want speed you’ll have to set up a cross compiler environment or try this other cross compiler setup.

Also: Links in the article below seem to be broken and it might not work anymore.

Original (outdated) article:

Today a colleague and I wanted to install gnuradio on a Raspberry Pi. This can then be combined with the amazing RTL-SDR dongle. This dongle is...